An international group of renowned statisticians from the University of Sydney, Northwestern University and the University of Texas have collaborated to investigate the predictive performance of the COVID-19 model developed by the Institute for Health Metrics and Evaluation (IHME), which provides forecasts of ventilator use and hospital beds in the United States.

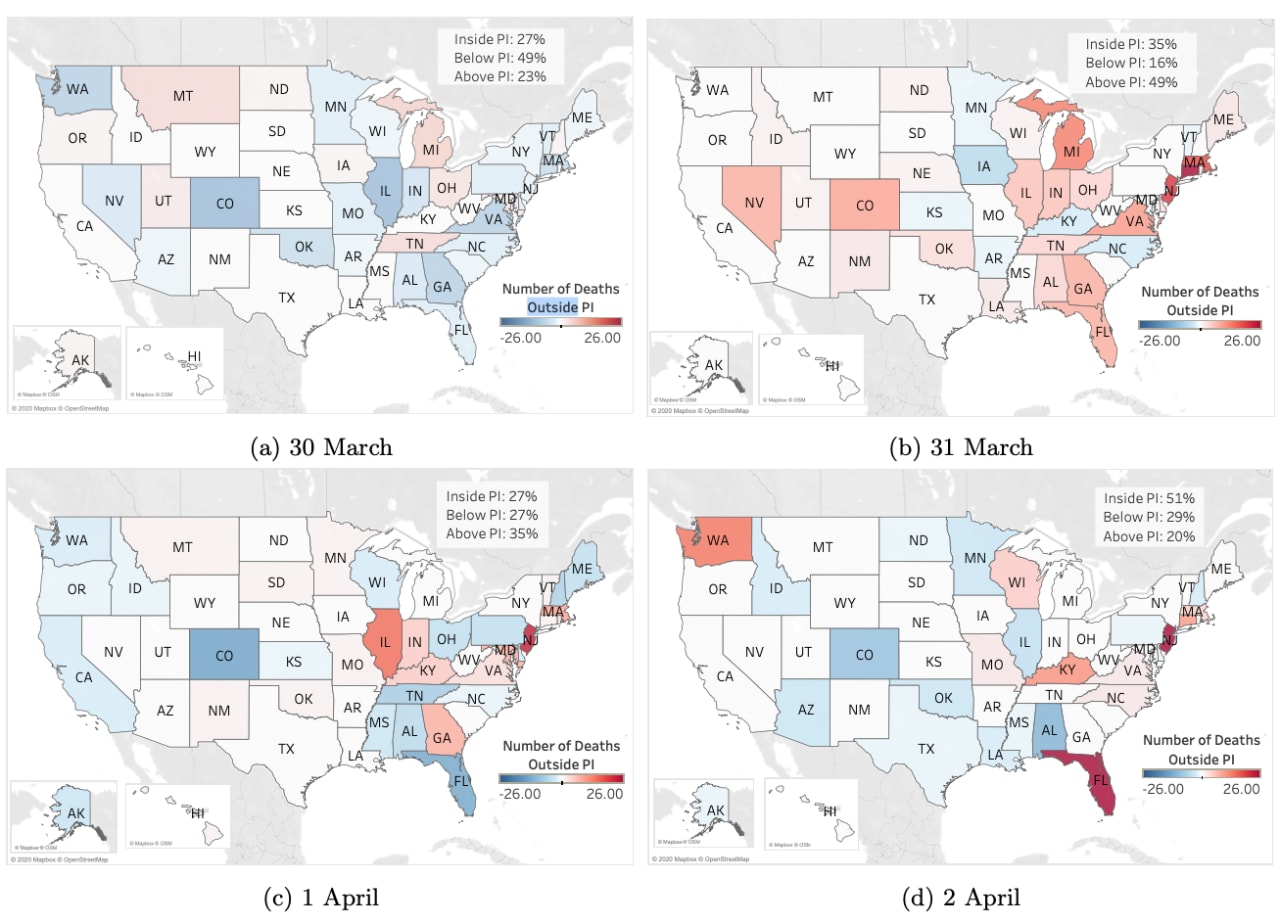

In a paper published on the preprint server arXiv, the researchers found that the IHME model substantially underestimates the uncertainty associated with COVID-19 deaths.

Seventy percent of US states had an actual death rate outside the 95 percent prediction interval for that state, casting doubt on whether the model is suitable to inform COVID-19 resource allocation.

The model, which provides forecasts on a state-by-state basis across the United States, has been circulated widely by the media and on social media, and has informed policy decisions at the highest levels, having been cited at a White House press conference on 31 March 2020.

“The discrepancy between predicted deaths and the actual death rate in the US has serious implications for the US government’s future planning and provision of ventilators, PPE, and the staffing of medical professionals equipped to respond to this pandemic,” said University of Sydney statistician and Director of the Centre for Translational Data Science, Professor Sally Cripps.

“The level of uncertainty implied by the model casts doubt on its usefulness in driving the development of health, social, and economic policies,” she said.

Professor Martin Tanner from Northwestern University also questioned the model’s capacity to make long-term projections.

“I am concerned that if the UW-IHME model has had difficulty in predicting the next day, how will the predictions fare over the long term?” said Professor Tanner.

Understanding prediction intervals

“In making a prediction – often called a point estimate – about the rate of spread and death rate of a new virus in New York, it is widely understood that the prediction carries uncertainty,” said Professor Sally Cripps.

“Statisticians give a range, called a prediction interval, in which the actual future values are likely to lie. This involves estimating uncertainty. A 95 percent prediction interval is an interval where we would expect 95 percent of actual future values to lie,” she said.
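To make the idea concrete, the short Python sketch below builds a central 95 percent prediction interval around a point estimate. It assumes, purely for illustration, that the forecast error is normally distributed with a known standard deviation; this is not a description of how the IHME model constructs its intervals, and the function name `prediction_interval` and the numbers used are hypothetical.

```python
from statistics import NormalDist
from typing import Tuple


def prediction_interval(point_estimate: float, sd: float, level: float = 0.95) -> Tuple[float, float]:
    """Central prediction interval, ASSUMING a normal forecast error with known SD.

    This is an illustrative assumption, not the IHME method.
    """
    # Two-sided interval: leave (1 - level) / 2 of the probability in each tail.
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 when level = 0.95
    return point_estimate - z * sd, point_estimate + z * sd


# Hypothetical forecast: 100 deaths predicted, with a forecast-error SD of 10.
low, high = prediction_interval(100.0, 10.0)
print(f"95% prediction interval: ({low:.1f}, {high:.1f})")  # prints (80.4, 119.6)
```

The width of the interval is driven entirely by the estimated uncertainty (here `sd`): if that figure is set too small, the interval becomes too narrow and actual values will fall outside it far more often than 5 percent of the time.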

“IHME’s model gives 95 percent prediction intervals for the number of deaths in each state. If the uncertainty is estimated correctly, then we would expect the actual values for 95 percent of states to lie within those intervals,” she said.

“But we don’t. In fact, only 30 percent of US states have actual death counts which lie within the 95 percent prediction intervals, while 70 percent lie outside that interval. So either the uncertainty is under-estimated or the point estimate is inaccurate, or both,” she said.
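The check Professor Cripps describes, comparing the share of actual values that land inside their intervals against the nominal 95 percent, can be sketched in a few lines of Python. The function names and the five-region data below are hypothetical and only illustrate the calculation; they are not the study’s data or code. The second function adds a rough sense of scale by assuming, as a simplification, that the 50 state intervals are independent and each genuinely covers 95 percent of the time; real state death counts are correlated, so this is only indicative.

```python
from math import comb
from typing import Sequence, Tuple


def empirical_coverage(actuals: Sequence[float], intervals: Sequence[Tuple[float, float]]) -> float:
    """Fraction of actual values that fall inside their (low, high) prediction interval."""
    if len(actuals) != len(intervals):
        raise ValueError("need exactly one interval per actual value")
    hits = sum(low <= y <= high for y, (low, high) in zip(actuals, intervals))
    return hits / len(actuals)


def binomial_tail_at_most(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p).

    Under the simplifying ASSUMPTION of n independent, correctly calibrated
    intervals (each covering with probability p), this is the chance that
    at most k of them contain the actual value.
    """
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))


# Hypothetical example: five regions, each with an observed count and a 95% interval.
actuals = [12, 40, 7, 95, 23]
intervals = [(10, 15), (20, 35), (5, 9), (60, 80), (25, 30)]
print(empirical_coverage(actuals, intervals))  # 2 of 5 inside -> 0.4

# If 50 calibrated, independent 95% intervals were issued, how likely is it
# that 15 or fewer (30 percent) would contain the actual value?
print(binomial_tail_at_most(15, 50, 0.95))  # an extremely small probability
```

Coverage this far below the nominal level is very unlikely to be bad luck, which is why it points to the intervals being too narrow, the point estimates being off, or both.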

DISCLOSURE:

The paper, Learning as We Go: An Examination of the Statistical Accuracy of COVID19 Daily Death Count Predictions, is not yet peer reviewed. The research has been submitted to PLOS Medicine and has been published on the preprint server arXiv.

Professor Sally Cripps, Dr Roman Marchant, Professor Noelle Samia, Professor Ori Rosen and Professor Martin Tanner would like to thank the IHME authors for making their predictions and data publicly available. The researchers agree with the statement on the IHME website that having more timely, high-quality data is vital for all modeling endeavors, but its importance is dramatically higher when trying to quantify how a new disease could affect lives. Without access to the data and predictions, this analysis would not have been possible.